Everything I know about getting buy-in

Or: How to justify technological decisions

Introduction

In this blog post I try to formulate a loose framework we use when trying to justify technological decisions, mainly when introducing new technology to our stack, but also when choosing what problems to invest time into.

This isn’t a bulletproof step-by-step methodical decision tree, but more of a guideline that helps us understand the problem, solution, risks and tradeoffs, and then communicate that understanding to get buy-in from stakeholders.

The process consists of the following parts:

Why - What’s the problem we’re solving?

What - What’s the proposed solution?

Risks - What are the risks of the proposed solution?

Mitigations - How can we mitigate the risks?

Cost - What’s the effort/cost?

Tradeoffs - Evaluating everything we know so far.

Why now? - Timing and priorities.

How will we know we were right?

This process is non-linear: You could start with the problem, but you could also start with the solution and find the problems it solves. Then as you gain more understanding of the problem and tradeoffs you could go back and find better fitting solutions. When you consider risks and mitigations, you could find ways to relax the original requirements which in turn, could open new simpler solutions. Finally, we have to stop and make the best decision with the information we have at the time.

Note: hand written by a real human. Added the figurative AI images just to break up the huge wall of text a bit 🙏.

Why? - Motivation

It’s not uncommon to first find the hammer, and then find the nails.

Sometimes we discover a new technology, a pattern, a different way of doing things, that makes us see potential issues with the current stack. We think we should start using the new thing, but what problem that we have would it really solve?

We can usually classify it into two categories:

It solves a problem we have with an existing solution.

It opens new possibilities to do things we can’t do currently.

Solves an existing problem

We can start asking questions to better define the problem:

Can we articulate the problem?

What are we observing and why is this bad?

Who cares and why?

How frequent is this? Is this a daily/weekly/monthly thing?

What is it costing us?

What’s the business impact - is it hurting end users?

What’s the impact on productivity - does it take a lot of dev attention and context switches?

How bad is it when it happens?

Is it solving a now-problem, or preventing a problem in the future?

What are the current mitigations? If there aren’t any, why?

The goal of these questions is to understand what exactly we’re trying to solve, and if this is a real problem.

Let’s look at some examples from recent history:

We’re running Kafka-Connect to CDC some Postgres tables to Kafka topics. On restart it takes about 10 minutes to come up because it has to replay its internal offsets topic due to a bug in compaction. During these 10 minutes CDC isn’t running and some of our event-sourcing topics don’t get updates, which means we’re serving stale data to users. But the data is mostly very slow changing so impact on users is negligible, and it’s very rare that we would actually restart Kafka-Connect.

This is mostly a problem for someone who doesn’t know this is going to happen, they restart Kafka-Connect and the connectors won’t come up right away, which will result in panic and thinking they messed something up. But unless there’s a trivial 10 minute configuration change we can apply, it’s probably not something we should invest time into. It rarely happens, and when it does, there’s no observable impact on anything.

Every couple of months we’re getting a free disk space alert on one of our critical databases, the reason is that the cleaning job hasn’t been tuned recently and it’s not keeping up with the rate the disk fills at. When this happens it requires immediate attention from a dev to run multiple commands manually and get it to free the disk space, if we miss the alert, disk space can run out and/or we’ll have to extend the disk and pay more for storage because shrinking the disk back is a lot of effort.

It’s annoying but straightforward, while there’s no user impact, the risk is pretty high and could potentially result in an outage. It’s also forcing a dev to context switch and to be annoyed. This is an important but not an urgent problem, and the effort to fix it is probably low. We don’t have to drop everything and work on it now, but schedule time to work on it soon.

We’re getting more frequent alerts on service X, it used to be once every couple of months, and now it becomes a weekly thing.

Every time this happens the downtime is longer than before because of the scale that is constantly growing.

When it’s down, we’re losing user data, and as the downtimes become longer we lose more and for more users.

This impacts 30% of our users that use the flow, but also creates gaps in data which makes other data initiatives harder.

If we don’t fix this in Y time, the system is expected to grind to a halt.

This sounds like a ticking time bomb that we must fix as soon as possible.

We’re using an older version of a package, we don’t need to upgrade the package for any feature/improvement,

but if we don’t upgrade soon then when we’ll have to upgrade it will be much harder since it could be multiple major versions apart. The solution is to extend our automatic tests so we can have confidence we can upgrade packages and not change how the service behaves, and also to integrate monitoring package version updates, likeDependabot.

This prevents a future problem:

a security vulnerability in the outdated package

potentially having to jump multiple versions when you’re forced to update the package - which is riskier and requires more work.

This is a whole class of problems which are especially tricky because we’re preventing problems before anyone feels the pain. It really depends on the engineering culture and other external forces (like regulation) to determine how easy it would be to justify working on it given other priorities, but we should start implementing some small incremental steps in the right direction.

We’re storing events in a format that’s not conducive to compression, in our current scale this means we’re paying 80% more than we would have if we used X. This translates into $YYYY per month, or in $ZZZZ potential cost savings.

It’s always nice to save money, but a cost optimization isn’t always a problem in itself right now:

We could be optimizing for quick growth and exchanging money for velocity, once we grow enough we’ll start reducing costs.

The engineering effort and potential risk and complexity might not be worth the potential savings.

The opportunity cost could be higher, we might make more money working on something else, than save money by optimizing.

But sometimes this is a problem:

If we can’t release a feature until we can get the per user cost under some dollar amount.

We deployed some change and started bleeding money for no good reason.

It was cheap when we were small, and as we grew it became more and more significant.

Finally, there are low effort high impact cost optimizations that save a lot of money without investing a lot of time and risk, these are almost always easy to get everyone excited about.

Unlocking blocked opportunities

The second class of justifications is what we would have gained if we had this tool/technology. For example: If we move to using technology X we could do Y, where Y could be solving new kinds of problems and enable new features for our users.

But remember that capability itself isn’t the justification, the blocked business value is.

Let’s look at some examples:

If we use real-time streaming statistics calculation we could have triggers set to do something when an aggregated statistic passes some threshold. It’s something we can’t do with polling because we have millions of users and we can’t re-calculate the statistics fast enough.

But like in the previous section, consider what problem is it solving?

Do we have a business opportunity that it would open?

Ask product managers if they had this, what feature would we actually build? What would be the impact?

Why aren’t we building these features? Maybe it’s not important enough?

Are we building some approximation of these features? for example we’re polling instead of getting updates via a push? What would be the business benefit of getting the data faster to the user?

For the above example, the answer could be:

If we had real-time statistical triggers, we could identify that a user on our site is about to get frustrated and requires human assistance in real-time. By not doing that, we’re leaving $XXXX money on the ground.

Would it allow us to model existing problems in a different way?

What’s wrong with how we model it now? What problems does the current way cause?

If the new way doesn’t solve actual problems, it’s just different, not better.

For example:

We have an event-driven system: there are triggers that fire at the end of the day to signal that we should start calculating statistics for users’ events since the last day. We’re using RabbitMQ to send the triggers to workers, which query the database that stores the events, calculate statistics per user, and store the results. This happens on a per-user basis, and the events database is also used to serve the events to the mobile app. Since most of the triggers happen around the same time, it creates a thundering herd problem and load on the database, with some impact to query latency.

We could remodel this problem from a real-time, event-driven problem, to an offline, batch-processing ETL problem. Store the events in a data lake on S3 using Iceberg, run a batch job that fetches the data based on time range and not based on the user, calculate and update the statistics in a serving database that just serves it back to the app.

Moving to the ETL reduces load from a production user-facing database, shifts the problem to the offline ETL space which is easier as it doesn’t have to be always on like an online system, but more importantly this different way of modeling the problem also opens new possibilities, after modeling it as a DAG, we could easily add more steps that depend on the statistics step, for example after we’re done calculating statistics, we could feed them into an LLM to generate additional insights, and have another step after that and so forth - without adding load and risk to the user-facing online systems.

Solution

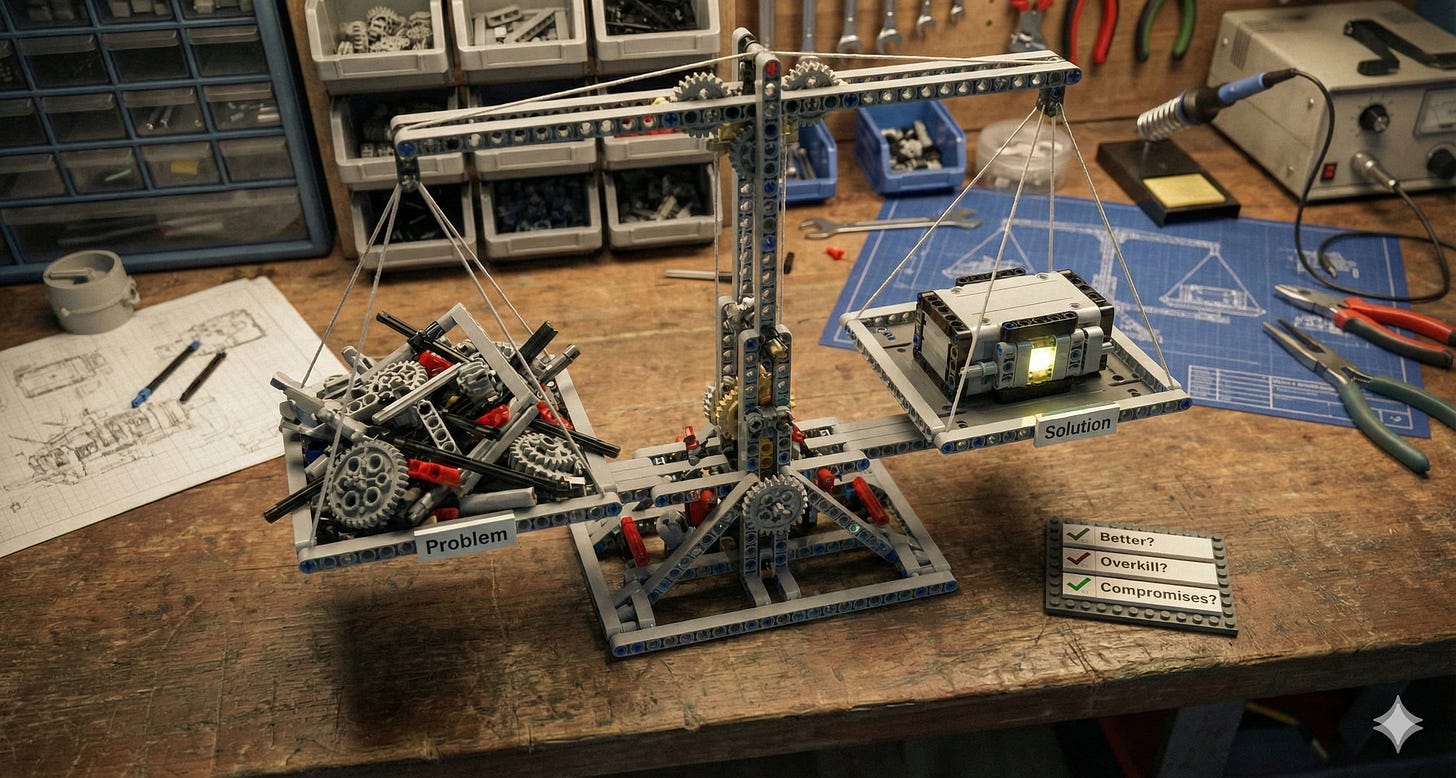

Now that we know exactly what problem we’re solving and why, does our initial solution still stand?

Is it actually better, or just different?

If it’s better, in what way? How much better is it? What’s the marginal benefit?

Is this an overkill? Does the new thing do much more than we actually need?

What compromises does the solution make? For example consistency for performance?

Is this something that’s great for 100x our current scale but just overhead at the current scale?

Are we rolling it ourselves? Is this a solved problem?

How do we know we’re not trading one set of problems for another?

The point of this step is kind of a sanity test to initially validate our solution:

We found an interesting tech

We think it could solve some problems we have

We validated that those are real problems

Now we validate that the new tech actually solves them in a meaningful way compared to what we currently have.

In the next steps we’ll continue evaluating the solution in more detail, but of course it also applies to any other ideas we’ll come up with along the way.

Risks

Assuming everything goes well in the previous steps, we have a real problem the new tech seems to solve in a meaningful way, but it’s a change and it’s new, so it’s risky.

The rule of thumb is if we don’t have hands-on experience with the new thing in a very similar problem space - expect surprises.

We can divide risk into these main categories:

Wrong assumptions

Missing features - we assumed it can do X, but X isn’t yet available, or in some beta state.

We assumed something works a certain way, but it doesn’t - for example: consistency or ordering guarantees.

We assumed it would be compatible with other parts - but it’s not and requires some translation layer.

It’s actually optimized for different use cases and doesn’t fit the way we model our data.

X is available as beta, but at the last moment they decide to remove the feature.

We didn’t understand the pricing model.

Scale

It works in a demo with 10 users, but will it work at our production scale?

Can it handle the expected requests/second at our required latencies?

Will it work with our dataset sizes? Can it handle our query/insert patterns?

Is there a risk for unexpected high costs?

We assumed ingress traffic is free, but didn’t expect we’ll have to pay for egress SSL handshake traffic.

Is it a managed solution and we’re paying per usage, or is it self-hosted and we’re paying for compute/network/storage?

Changed assumptions

Our current system could already depend on some implicit assumptions about it’s part, even a small change like adding read-replicas to a database, could now create problems where we update a record but then read it from a replica and get the old version - this is fine if you plan for it, but if the system expected everything to be strongly consistent and it’s not, it could require a lot of unexpected effort and bugs.

More work than expected

We estimated the integration of the product will take X, but in reality we’re discovering issues while we’re integrating and it requires more effort than expected. It’s challenging to estimate effort in the day-to-day case, it’s extra hard when dealing with completely new things.

Steep learning curve, onboarding other developers takes more time than expected, our team becomes a bottleneck supporting other developers trying to use/debug the new thing.

Third-party dependency

We went for a managed solution, but when we have some production issue the third-party provider takes a very long time to respond, meanwhile our system is down and users are experiencing an outage.

Bugs

It’s possible we’re hitting some rare use case that currently has an open bug and it doesn’t seem like it’s going to be fixed soon.

Mitigations

If we want to be innovating, gain trust and buy-in, we need to find ways to hedge against these risks. Everyone understands there are risks, but everyone also doesn’t like surprises, here’s some ways to increase predictability:

Reducing uncertainty:

Understand the problem / solution better.

Create a proof of concept:

The goal here is to get some hands-on experience with the thing, which helps to identify gaps docs and theory and concrete implementation.

By building a simplified version of the solution to see if it feels right and what’s missing.

Run some stress tests, see where the bottlenecks are, where it breaks.

If possible, send a subset of mirrored production traffic, or at least a snapshot of real events - it could find some edge cases you didn’t consider.

If possible, let it run over a longer time - does performance change, does it crash?

How does it recover from a crash?

How would it feel to maintain it, make changes?

How much does it cost? Are there any unexpected costs? like networking, CPU, storage?

Get feedback:

Share your idea with other colleagues and ask for their thoughts, don’t wait for a architectural review meeting to get your first feedback.

Pay attention to gaps and leaps in your explanation, this might be a signal you’re missing something.

Pay attention if the explanation sounds too complex, it could be that it is.

Schedule a pre-mortem meeting, a thought exercise where you imagine the change caused a big outage, and you figure out why.

Talk to people using the tool, if it’s managed, read reviews, look for Problem with X, if it has similar alternatives, see why? What X’s problems do they solve?

Validate the pricing model and have a sizing exercise with their sales, give your current scale, the 2x scale, 4x scale, etc.

If you’re doing a PoC, measure the cost for the scale of the POC and then multiply the measurement to get a ballpark of true cost - validate these numbers with the vendor.

Reducing blast radius:

Start small and have a plan.

Limit the scope - we don’t want to refactor the whole thing, but find a small opportunity where we can introduce the technology

The idea is to start small and gain real-world experience, we expect there will be issues, but they’ll be limited to a single place.Have a fallback plan - better if it’s automatic:

If you’re replacing an existing thing, you can run them side by side and have something that detects failures and falls back to the previous thing.Have a rollout plan - try it on a subset of users, first in some sort of dry mode, then for real, add monitoring to make sure everything works as expected, then rollout to more users.

Relax requirements

It’s possible that we’re trying to solve too much.

Can we simplify the solution if we remove some of the requirements?

Can we split the problem and handle the 99.9% in one way, and have a different solution for the 0.1%?

Can we start with a simplified MVP solution and add some of the other features later if needed?

For example:

Let’s say we need to serve some user data, we have 10k requests per second and we require to serve it under 100ms

But we also know that 99.9% of the data is from the last 24h, if we can relax the requirements and say that “cold” data, older than a day, will get served at 500ms latency, we can have a fast solution for a small fraction of the data (last 24h, small, fast, expensive) and store everything else on S3 (historical, lots of data, slower, cheap).

Reducing sunk-cost risks:

Agreeing in advance when to quit.

Limit your efforts - set deadlines for how much we’re willing to invest before we decide it’s not for us.

Have a rollback plan - if we decide this wasn’t a great idea, how easy is it to roll back?

Sometimes it’s cheaper to take a risk than to mitigate it. Make sure everyone is aligned on what risk we’re willing to take.

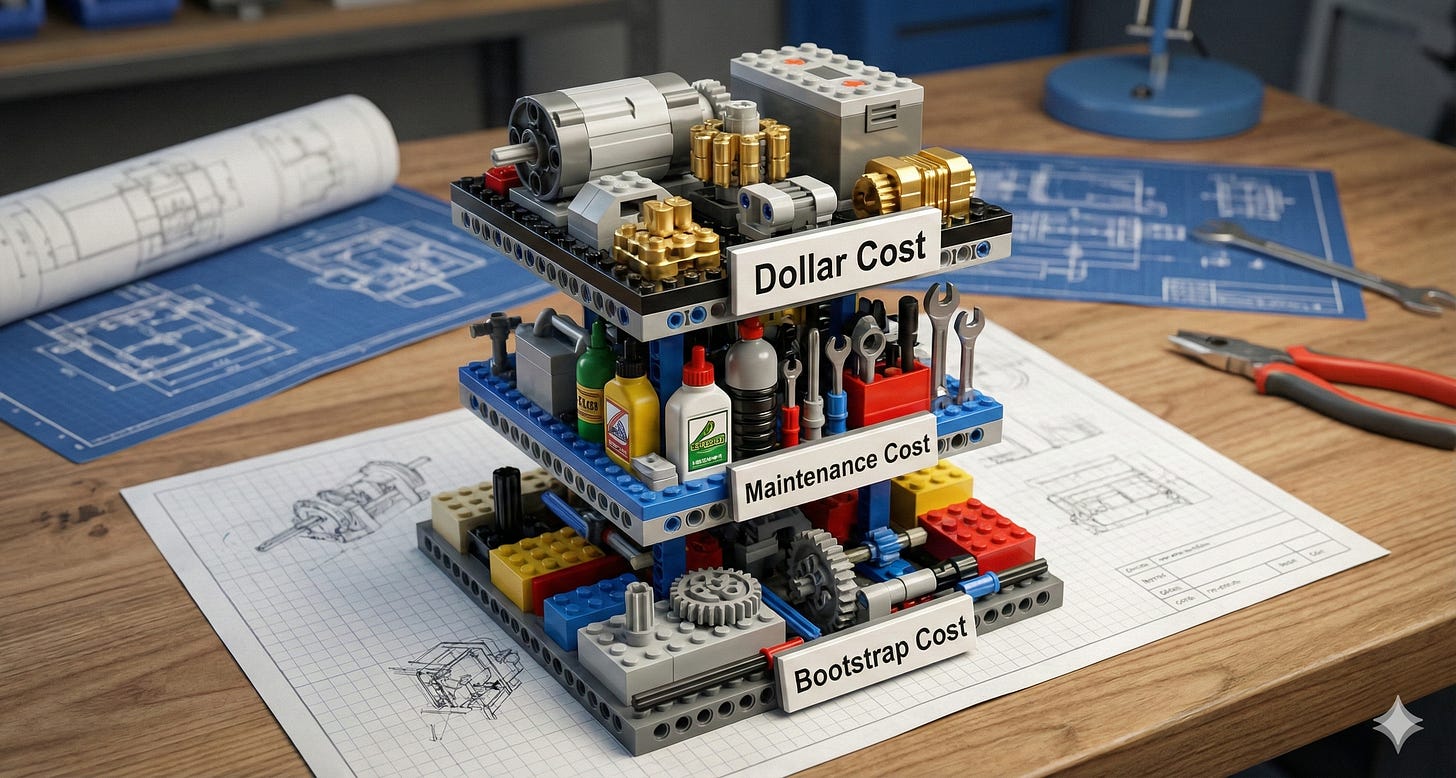

Effort & Cost

By this point you should already know:

What problem are we solving?

What are the risks of the proposed solution?

What are the mitigations?

Next, let’s understand the cost of doing this change.

The dollar cost

Is this a managed solution?

Subscriptions/licenses fees.

Infrastructure costs like compute, traffic, storage, etc.

Unit economics: Cost per user, per event, per log line, etc.

Initial bootstrap cost

How much time/effort do we need to invest to go from 0 to 1?

Setting up the new solution.

Doing a PoC.

Learning about it.

Initial back-and-forth negotiating the contracts.

Trying various features while modeling the problem in different ways.

Ongoing maintenance cost

How much time/effort will we invest in the long run?

Version upgrades - this could be especially painful.

Potential production issues.

Adopting and migrating to the new way

Would it be cheaper to pay someone else? managed solution?

Tradeoffs

Ultimately, when evaluating tradeoffs remember that tradeoffs are always contextual:

What’s currently important for our company?

Two solutions could have different properties or provide different capabilities

One solution could provide strong-consistency and the other very high throughput.

Without context these things have no intrinsic value.

If strong-consistency is what we must have, then it doesn’t matter how good the other solution is, it just doesn’t solve our problem.

It could even be context dependent on a per service basis:

For the most business critical services we value availability above all else and willing to pay in complexity to get a more resilient system

For others, we might value time-to-market and maintainability, and can tolerate a rare maintenance/outage.

By this point we should have a very good understanding of the problem space, the constraints and requirements and some potential relaxation of those in favor of reduced risk or effort. We should also have multiple solutions, each with a different property profile, effort estimation and risks.

For example:

We have many sources that generate different types of events, it could be user input, IOT sensors like temperature and humidity, algorithmic insights based on video analysis, etc. We’re serving these events to users via different APIs. The problem is that adding a new event source and serving the new event to users requires a lot of boilerplate work.

One option is to create some libraries or framework that allows to produce and consume these events, also materialize them for serving with different options, to a database, a Redis, or even have some real time aggregations. The framework also takes care of creating the public REST interface that allows users to query events. The end result would be many micro-services, each handling some subset of related events, each independent from the others - so in case of a problem, only a small subset of all events becomes unavailable.

Another option is to create just one service, stream all events to this service, have all the logic to handle events written once in this service and in its REST layer, and allow filtering based on different event types. But if it goes down, everything goes down.

What’s better? If we optimize for availability and resiliency, the first option, if we optimize for time-to-market and reducing complexity, the second option sounds easier.

The key here is not to fall in love with some solution, but to be objective and evaluate the solutions based on the properties and capabilities we care about for this specific thing.

Why now? Timing and priorities

The easy case

We have a problem we already decided we’re solving now and the proposed solution solves the problem within the budget of time and risk. - Let’s go!

The common case

In other cases, when the problem is not urgent or the effort is significant, we need to justify why we think we need to solve this now, consider the opportunity cost (what else could we be doing with this time) and priorities, in this case, what really helps is having an architectural vision and finding opportunities that bring us closer to that vision, for example:

We have a bunch of ad-hoc ETL scripts running on

Jenkinsand we want to introduce a proper ETL service likeAirfloworDagster, now let’s say we’re working on some project that requires creating another such ad-hoc script but it’s complex enough and has a DAG like structure with steps depending on other steps - the marginal difference between rolling your own and boot-strapping something likeDagsteris small, like 2-3 extra days of work, and it’s clear that the gained setup and knowledge would return the investment in the future.We’re using parquet files, but we want to start using

Iceberg- next time there’s a need to start writing aKafkatopic toS3, we can invest a couple extra days of work into figuring out how to do it withIceberg, communicate the benefits and hedge the risks by limiting how much effort we’ll invest before quitting and going back to what we know.We’re tasked to do something and we’ve identified that we already have 4 very similar versions of the same thing that we’re maintaining separately; we realize we have a good enough understanding of the problem and how we can generalize it; we propose the more unified and generic solution; understand what would be the effort to migrate to this service and what would be the benefits, like simplifying our architecture. We invest a little bit more effort to create adapters that make migration basically a drop-in replacement for other teams and get a buy-in from their managers for the small time investment to migrate to the new service.

Work to avoid problems

There’s also the case where we want to do a preventative change to avoid a problem:

Is this a real problem? Our legacy code doesn’t support a newer version of a database we’re using.

Is there a real deadline? AWS will force upgrade our database in 3 months.

Are there other mitigations? Can we pay for extended support?

In this case, we need to choose between taking time to upgrade everything we need in the next 2 months (to leave a month of headroom) - or if there’s something much more important, we can consider paying the extended support.

Bottom line

There’s no universal formula, but a few habits are helpful:

Understand the priorities of your organization - context - which are constantly changing.

Have a good plan ready - you know the problem, risks, mitigations, tradeoffs, etc.

Always look for opportunities to execute the plan or a part of it - what’s the long term vision, what are some future projects, etc.

So it’s really not just about technology: it’s about talking to people. What does the CTO care about? What PMs are planning? What other teams are working on? What are the important problems for other people?

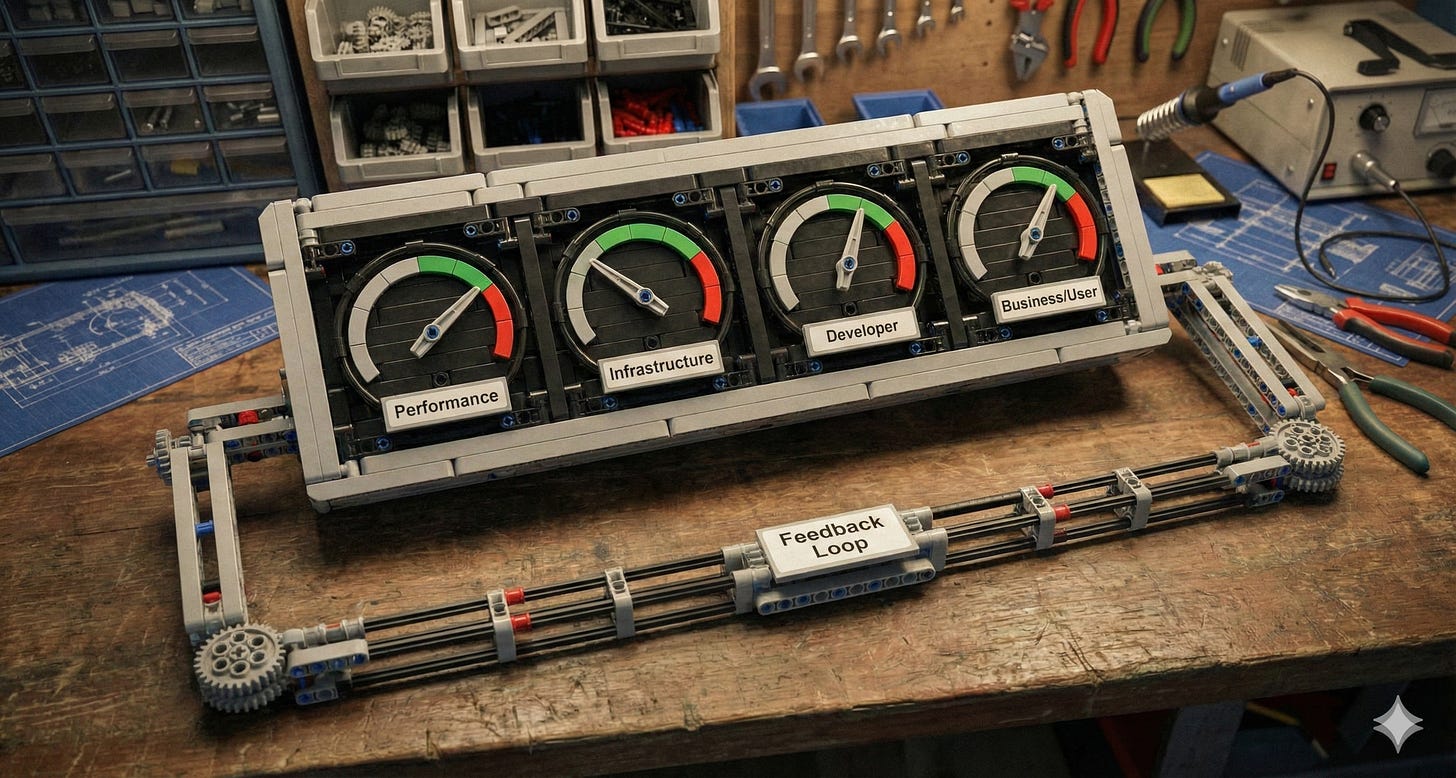

How do we know we were right?

This is the easiest step to miss, but it’s important for two reasons:

Trying to identify the

success metricsahead of time usually sheds more light on the problem we’re trying to solve - if there’s no meaningful way to measure it, or if the margin of success is kind of small, it could be a signal that we don’t understand the problem enough, or that it’s really not important. But the fact we don’t know how to measure it doesn’t mean it doesn’t exist, this is especially true for problems of the preventing class. This just means we have to be more creative.Feedback - everything in this post are rules of thumb, heuristics and intuition - to improve our thinking we need to do things and learn what works and what doesn’t.

So, we’ll define some success metrics:

Performance metrics: latency, request rate, CPU usage, etc.

Infrastructure metrics: cost, downtime, bugs, data loss, etc.

Developer metrics: time to market, bug time, unplanned work, etc.

Business/user metrics: ratings, adoption, etc.

Not everything can be captured by numbers, sometimes it’s also useful to collect feedback, like developer experience: Do they enjoy using the new thing? Do they feel more productive? etc

And finally, make sure to document your decisions! The framework in this post should make it clear why we’re making some change, what were the alternatives, why we chose this instead of something else, what were the tradeoffs we considered at the time and why we decided to optimize for x and not for y, etc.

Then later, in 6 months we can review this and ask ourselves did we make the right decision? The right decision doesn’t mean that we were correct, it means given what we knew back then, did we have the right mental model and thought process to choose the best option given the data we had - and then, work on improving our mental toolkit for making better decisions - it could be things like identifying biases, but it could also be investing more time into gathering data and doing better POCs, etc.

Full Example

(1) Why?

We have an availability problem where most of our micro-services depend on data served by one service (service x).

This caused 7 system-wide outages this year when this service was loaded or unavailable.

It’s directly impacting user experience and we’re seeing an increase in bad reviews.

It also puts a spotlight on how services are coupled to each other, and a small change in one place could have a devastating effect on other places - Thus this is also hurting developer velocity as changes are less predictable and riskier.

(2) Solution

We can mitigate the issue by reversing the data dependency direction, and push the data to the micro-services instead of them pulling it on demand.

To facilitate that we can use Kafka topics to send state changes and the services can consume the state and store it locally, thus removing the call time dependency on service X.

Basically, implement a sort of event sourcing.

This solves the dependency problem because services will have the data they need ahead of time, and service x won’t be part of their SLAs.

But it’s not free, we’ll be duplicating some data and would have stale data if service x stops sending updates for some reason.

(3) Risks

The main risks are that this is a new technology (for us) we don’t have in-house expertise/experience in, but also:

We’re also changing some flows that were designed with

read-after-writeconsistency in mind, and we’ll be changing them toeventual-consistency

meaning there might be some staleness in data, for example when service X is down or when there’s a lag in consumption of the state changes.We could by mistake leak some internal implementation details - for example if we just CDC a table currently being served behind an API layer

(the API is the contract, not the table columns)We could have production issues or limitations with

Kafkathat we’ll only discover in a later stage.Duplication of data across many services

Unknown unknowns.

(4) Mitigations

There are multiple ways to mitigate the risks:

Starting with only one slow-data-changing flow that we understand and know can tolerate some data staleness (but impactful and important flow)

We could use something like

outboxpattern, and write business events (for example, user updated its name, instead of doing a CDC on the production table)We can use managed

Kafkasolutions and not maintain our ownWe can run the new and old solution in parallel, calling service X and also fetching from local state built from the Kafka topic - comparing them

We can simulate different errors like data staleness or Kafka node restarts and have QA test how this affects user experience

We can roll it out gradually

Consult with experts, and/or map known issues - Kafka is very mature technology, we can read a lot about it.

(5) Effort / Cost

There are 3 main efforts:

Learning about event-sourcing - reading some blog posts, familiarizing with the jargon, identifying common pitfalls, etc.

Initial setup - having

service xproduce events to a Kafka topic, and consuming it from another service and storingQA - mapping user flows around this change and testing what will go wrong if data becomes stale.

Considering all of the above, the effort estimation for “0 to first flow” is about 4 sprints.

(6) Tradeoffs

Other solutions to the original problem could include things like:

More aggressive caching on the micro-services - but we’ll have to take care of cache invalidation, which is a hard problem in itself

Making service X more available - for example moving its data store to a distributed database and adding more compute power - but this is even more complex and more work

The main benefit of the proposed solution is that it’s an architectural pattern we can apply in other cases (give examples).

It solves the cache invalidation problem since data changes are pushed to the consumers, and since service X stops being a point of failure and needs to handle a lot less calls and its infrastructure can remain simple.

It also creates islands of mostly independent services, consuming global state changes from topics, so services don’t need to know or depend on other services, this goes hand in hand with a squad like structure and simplifies some questions of service ownership.

While the proposed solution adds complexity in some sense (eventual consistency is harder than strong consistency, debugging a distributed system, dealing with Kafka vs REST) it also removes complexity by removing dependencies between services, and moving to handling the data when it changes and not when it’s requested. In our case most of the data rarely changes, and services only need a subset of the data service X returns.

So for us, for some specific business critical flows, this is a good tradeoff.

(7) Why now?

We already had 7 disruptions and in light of this, the company decided to have availability and downtime reductions as an OKR.

So it’s already decided that we’re going to do something, the question is whether we do a simpler mitigation now and do the Kafka idea later?

Meaning - can’t we just add more cache to the micro-services and/or increase the instance sizes, add more read-replicas to service x?

We could, but there are some advantages of doing the Kafka change now:

It’s more work, but not significantly more compared to making

service xmore available or adding more cache layersWe’re growing and adding more services, which also depend on

service x, if eventually we’ll have to move to the new architecture it would be more work to undoIf we start now we’ll have more expertise by the time we really need it

The new flows we build will have eventual consistency upfront, instead of retro-fitting it after the fact, which is riskier.

(8) How do we know we were right?

What are the success metrics?

Zero outages related to

service xdependency within 6 months of full rollout95th percentile latency for affected flows improves by 40%+ (no more network hop)

Load on the migrated service doesn’t impact

service xor other services that depend on it.

Conclusion

There are so many interesting things we could be doing with our limited time, so it’s essential we focus our energy on what’s really important. Hopefully this framework can help you figure out what you’re actually solving, understand the risks and come up with mitigations, and then evaluate the costs and tradeoffs contextually, finally measure, collect feedback, and train our decision making muscle.

Good luck!